The 1-Second Tax: Why Mobile Speed Is an Architecture Decision

The question every Head of Platform in eCommerce should be putting in front of their CFO this week is not whether to compress more images. It is whether the current hosting and middleware stack can survive the shift to a mobile-majority revenue mix without quietly burning a fifth of paid acquisition spend. The math has changed. The org chart probably hasn't.

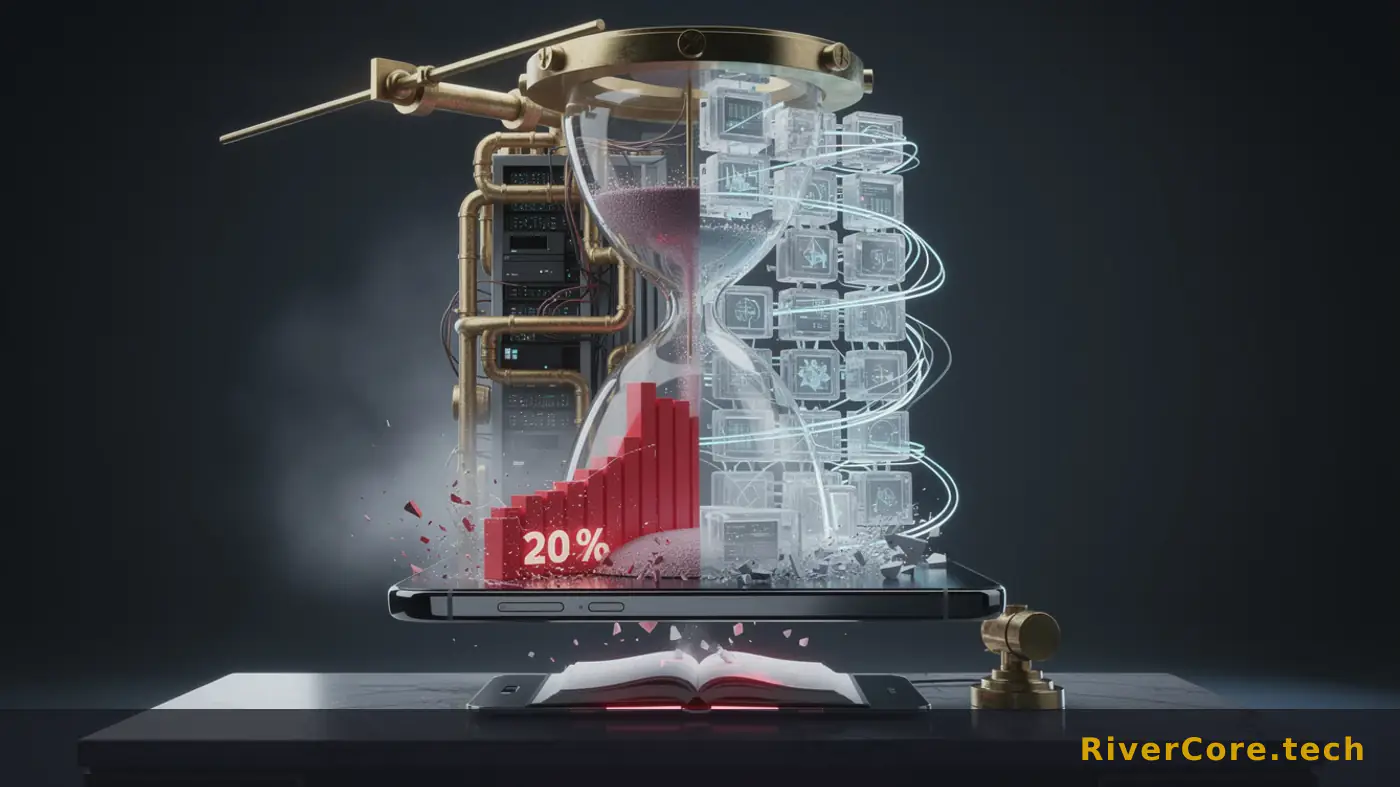

New performance data making the rounds this spring puts a hard number on something platform teams have argued about in roadmap meetings for years: speed is a P&L line, not a Lighthouse score. And the fix lives in places most product orgs don't staff well.

What Happened

As DesignRush reported in early March, performance data from Elementor shows that a one-second delay in mobile page load time can reduce conversion rate by up to 20%. That figure is paired with a long-running Capital One Shopping Research data point: 53% of mobile users abandon sites that take longer than three seconds to load. Neither stat is new in spirit. The combined consequence is.

The structural backdrop matters more than the headline. Mobile already accounts for 57% of global eCommerce sales, and that share is projected to hit 63% by 2028. So the conversion penalty is no longer applied to a secondary channel. It's applied to the channel that pays the rent.
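
As a back-of-envelope reading of those figures, the ceiling on exposed revenue is the mobile share multiplied by the conversion penalty. The sketch below works that out. The function name and the assumption that the penalty lands uniformly on every mobile session are illustrative and do not come from the cited data.

```python
def revenue_at_risk(mobile_share: float, conversion_penalty: float) -> float:
    """Upper-bound share of total sales exposed to a mobile conversion penalty.

    Assumes (hypothetically) that the penalty hits every mobile session
    equally and that average order value is unchanged.
    """
    return mobile_share * conversion_penalty


# Figures quoted above: 57% mobile share today, 63% projected by 2028,
# and a conversion penalty of up to 20% per second of delay.
today = revenue_at_risk(0.57, 0.20)
projected = revenue_at_risk(0.63, 0.20)
print(f"today: up to {today:.1%} of sales; 2028: up to {projected:.1%}")
```

Under those assumptions the ceiling is roughly 11.4% of total sales today and 12.6% at the projected 2028 mix. Real exposure depends on how many sessions actually see the extra second.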

Caleb Bradley, CEO of Bighorn Web Solutions, frames the problem as architectural rather than cosmetic. "Surface fixes can improve a speed score, but they rarely solve the real issue since eCommerce performance problems are often architectural in nature," Bradley said. He goes further on where the damage actually accumulates: "Backend decisions about hosting, caching, integrations, and data flow quietly compound milliseconds across every interaction. That's where revenue leakage begins."

His prescription is equally direct on measurement: "Synthetic tests tell part of the story, but real user performance data reveals where actual friction occurs." And on which pages to instrument: "The goal is to isolate what's slowing down revenue-generating pages specifically on PDPs, cart, and checkout. Auditing just the homepage isn't enough." Translated for a platform lead, that's a polite way of saying most synthetic monitoring dashboards are pointed at the wrong URLs.
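
One way to act on that, sketched under assumptions: take real-user Largest Contentful Paint samples, keep only the revenue-generating page types, and report a high percentile per page type instead of a site-wide average. The page-type labels, the tuple shape of the samples, and the choice of the 75th percentile are assumptions of this example, not something Bighorn specifies.

```python
from collections import defaultdict
from statistics import quantiles

REVENUE_PAGES = {"pdp", "cart", "checkout"}  # assumed page-type labels


def p75_lcp_by_page(samples):
    """Group real-user LCP samples (ms) by page type and return the p75 of each.

    `samples` is an iterable of (page_type, lcp_ms) tuples. Page types
    outside REVENUE_PAGES (for example the homepage) are ignored.
    """
    grouped = defaultdict(list)
    for page_type, lcp_ms in samples:
        if page_type in REVENUE_PAGES:
            grouped[page_type].append(lcp_ms)

    result = {}
    for page_type, values in grouped.items():
        if len(values) < 2:
            # A single sample has no meaningful percentile; report it as-is.
            result[page_type] = float(values[0])
        else:
            # quantiles(n=4) returns three quartile cut points; index 2 is p75.
            result[page_type] = quantiles(values, n=4, method="inclusive")[2]
    return result


samples = [
    ("home", 900), ("pdp", 1000), ("pdp", 2000), ("pdp", 3000),
    ("pdp", 4000), ("pdp", 5000), ("checkout", 2600),
]
print(p75_lcp_by_page(samples))
```

The homepage sample is dropped on purpose: the point is to surface the slow tail on the pages where purchases happen.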

Technical Anatomy

Strip away the marketing layer and the failure modes are familiar to anyone who's run a high-traffic commerce stack. Bighorn's diagnosis lands on five recurring bottlenecks, and each one maps to a specific build-vs-buy decision the engineering org probably made years ago and hasn't revisited.

First, overloaded themes and third-party scripts. Frontend templates routinely carry logic that should run server-side, and every additional tag manager entry introduces execution time and dependency chains. Second, weak or missing caching, where the system rebuilds content repeatedly instead of serving optimized versions. Third, inefficient database queries and excessive API calls: a single product view can trigger pricing rules, inventory checks, personalization, and recommendation queries, and that fan-out compounds across thousands of concurrent sessions. Fourth, legacy ERP and middleware integrations that slow dynamic requests, particularly when those systems were never designed for real-time commerce traffic. Fifth, hosting environments tuned for static sites that struggle under dynamic workloads, especially during traffic surges.
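
The fan-out in the third bottleneck is easy to see in a toy model. The sketch below simulates one product view that needs four backend answers, first awaited one after another and then issued concurrently. The service names mirror the paragraph above; the latencies are invented for illustration, and `asyncio.sleep` stands in for real network or database calls.

```python
import asyncio
import time

# Hypothetical per-call latencies (seconds) for a single product view.
BACKEND_CALLS = {
    "pricing": 0.04,
    "inventory": 0.03,
    "personalization": 0.05,
    "recommendations": 0.05,
}


async def call_backend(name: str, latency: float) -> str:
    await asyncio.sleep(latency)  # stand-in for a real network or database call
    return name


async def render_sequential() -> float:
    """Await each dependency in turn; latency is roughly the sum of all calls."""
    start = time.perf_counter()
    for name, latency in BACKEND_CALLS.items():
        await call_backend(name, latency)
    return time.perf_counter() - start


async def render_concurrent() -> float:
    """Issue all calls at once; latency is roughly that of the slowest call."""
    start = time.perf_counter()
    await asyncio.gather(*(call_backend(n, l) for n, l in BACKEND_CALLS.items()))
    return time.perf_counter() - start


sequential = asyncio.run(render_sequential())
concurrent = asyncio.run(render_concurrent())
print(f"sequential: {sequential * 1000:.0f} ms, concurrent: {concurrent * 1000:.0f} ms")
```

Concurrency shortens the wait for one shopper but does not reduce the number of calls hitting the backend. Under thousands of concurrent sessions, cutting or caching calls is what relieves the load.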

The recommended audit list is the engineering takeaway and worth memorizing: Time to First Byte, API call volume per page, third-party script execution time, Largest Contentful Paint, Total Blocking Time, database query efficiency, and hosting performance under peak load. Notice what's missing from that list. There is no mention of image format, no recommendation to swap a plugin, no advice to enable a "performance mode" toggle. Apart from the browser-side readings (script execution time, Largest Contentful Paint, Total Blocking Time), the diagnostic surface is firmly in backend territory.
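
A minimal sketch of turning part of that list into a pass/fail check follows. The budget values are assumptions, chosen to match commonly cited "good" thresholds for these metrics; they are not figures from Bighorn, and a team would normally tune them to its own baseline.

```python
# Assumed budgets in milliseconds; tune them to your own baseline.
BUDGETS_MS = {"ttfb": 800, "lcp": 2500, "tbt": 200}


def over_budget(measurements, budgets=BUDGETS_MS):
    """Return each metric that exceeds its budget, with the overage in ms."""
    return {
        metric: value - budgets[metric]
        for metric, value in measurements.items()
        if metric in budgets and value > budgets[metric]
    }


# Hypothetical p75 readings for a product detail page.
pdp = {"ttfb": 1200, "lcp": 3100, "tbt": 150}
print(over_budget(pdp))  # {'ttfb': 400, 'lcp': 600}
```

In this made-up reading, Time to First Byte alone accounts for 400 ms of overage, which is the kind of signal that points at hosting and backend work rather than frontend tweaks.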

For teams running PostgreSQL or similar relational backends behind a commerce platform, the database query efficiency line is doing heavy lifting. Pricing engines, inventory locks, and personalization joins all live there. On a busy PDP, the difference between a properly indexed query plan and a sequential scan is exactly the kind of millisecond compounding Bradley describes. The Postgres docs have covered this territory for two decades. The problem is rarely knowledge; it's ownership.
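
The scan-versus-index difference can be shown in a few lines. This sketch uses SQLite from Python's standard library purely as a self-contained stand-in; on PostgreSQL the equivalent check would be `EXPLAIN` or `EXPLAIN ANALYZE` on the same query. The table, column, and index names are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE inventory (sku TEXT, warehouse TEXT, qty INTEGER)")
conn.executemany(
    "INSERT INTO inventory VALUES (?, ?, ?)",
    [(f"SKU-{i}", "main", i % 50) for i in range(10_000)],
)

QUERY = "SELECT qty FROM inventory WHERE sku = ?"


def plan(sql: str) -> str:
    """Return SQLite's query-plan description for `sql`."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, ("SKU-42",)).fetchall()
    return " | ".join(row[-1] for row in rows)  # last column holds the detail text


before = plan(QUERY)  # no index yet: the planner scans the whole table
conn.execute("CREATE INDEX idx_inventory_sku ON inventory (sku)")
after = plan(QUERY)   # with the index: the planner searches by sku
print("before:", before)
print("after: ", after)
```

The exact wording of the plan varies by SQLite version, but the first plan reports a scan of `inventory` and the second reports a search using `idx_inventory_sku`.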

The frontend prescriptions (deferring non-critical JavaScript, lazy-loading below-the-fold assets, loading personalization and tracking after core content renders, and eliminating redundant applications) are necessary but insufficient. They protect the first meaningful interaction. They don't fix Time to First Byte.

Who Gets Burned

Three categories of teams are most exposed over the next 90 days, and each one has a different cost structure to defend.

The first is mid-market eCommerce platforms running on hosting plans procured by a marketing team three years ago. These setups were optimized for a static-site era, and the bill for that mismatch is now landing on the CMO's CAC dashboard rather than the CTO's infra budget. The political problem is harder than the technical one. Whoever signed the original hosting contract has to admit it.

The second is enterprise commerce orgs sitting on legacy ERP and middleware integrations. Replatforming is a multi-quarter, multi-million-dollar conversation, and the people who own the integration layer are usually not the same people who own the conversion funnel. That's the gap revenue leaks through. A conversion penalty of up to 20%, applied to the mobile traffic that drives 57% of sales, is not a rounding error. It's an existential argument for re-architecting the integration tier or wrapping it behind an async caching layer.

The third group is fintech and iGaming operators with commerce-adjacent flows: deposit pages, KYC checkpoints, cashier flows. The performance economics are identical even if the regulatory wrapper is different, and these teams typically under-invest in real-user monitoring on the exact pages where money changes hands.

The CFO at any of these companies should be asking the VP Engineering one question this week: what percentage of our paid mobile acquisition spend is being burned on bounce before Largest Contentful Paint fires on the PDP? If the answer is "we don't measure that," the conversation is already overdue. The General Counsel at regulated operators has a related question, which is whether session abandonment data is being captured in a way that creates discovery exposure. Both questions belong on the same agenda.
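
The CFO's question reduces to a small calculation once session data exists. The sketch below assumes a hypothetical session record with a `paid` flag and an `lcp_ms` value that is `None` when the visitor left before Largest Contentful Paint fired, plus a flat cost per paid session. All three are assumptions for illustration, not a schema from any particular monitoring tool.

```python
def spend_burned_before_lcp(sessions, cost_per_session: float):
    """Estimate paid spend lost to sessions that ended before LCP fired.

    `sessions` is an iterable of dicts with hypothetical keys:
      paid   -- True if the session came from paid acquisition
      lcp_ms -- LCP in milliseconds, or None if the user left before LCP
    Returns (share_of_paid_sessions_lost, spend_lost).
    """
    paid = [s for s in sessions if s["paid"]]
    if not paid:
        return 0.0, 0.0
    bounced = sum(1 for s in paid if s["lcp_ms"] is None)
    return bounced / len(paid), bounced * cost_per_session


# Made-up PDP sessions: four paid (one gone before LCP) and one organic.
pdp_sessions = [
    {"paid": True, "lcp_ms": 1800},
    {"paid": True, "lcp_ms": None},
    {"paid": True, "lcp_ms": 2400},
    {"paid": True, "lcp_ms": 3900},
    {"paid": False, "lcp_ms": None},
]
share, lost = spend_burned_before_lcp(pdp_sessions, cost_per_session=2.0)
print(f"{share:.0%} of paid sessions, {lost:.2f} in spend")
```

With this sample data the answer is 25% of paid sessions and 2.00 in spend. The organic bounce is excluded because it cost nothing to acquire.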

Playbook for Engineering Teams

For platform leads making architecture decisions in the next quarter, the move is to separate the cheap wins from the structural ones and sequence them honestly.

Cheap wins, executable in days: defer non-critical JavaScript, lazy-load below-the-fold assets, push personalization and analytics scripts behind core content render, and audit redundant applications doing similar jobs. These protect first interaction and buy political capital for the bigger fight.

Structural moves, executable in a quarter: implement edge caching through a CDN, optimize database queries and reduce unnecessary API calls, improve Time to First Byte through smarter hosting configuration, and enable object caching where possible. Pair this with real-user monitoring on PDPs, cart, and checkout specifically. Synthetic tests against the homepage are theatre.
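
Object caching, in its simplest form, means not rebuilding the same answer twice within a short window. The class below is a minimal in-process sketch with a time-to-live per entry. The class name, the `get_or_compute` method, and the injectable clock are all inventions of this example; a production setup would more likely sit on a shared cache service.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLCache:
    """Tiny in-process object cache with a per-entry time-to-live."""

    def __init__(self, ttl_seconds: float, clock: Callable[[], float] = time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._store: Dict[str, Tuple[float, Any]] = {}

    def get_or_compute(self, key: str, compute: Callable[[], Any]) -> Any:
        now = self._clock()
        hit = self._store.get(key)
        if hit is not None and hit[0] > now:
            return hit[1]  # still fresh: skip the expensive rebuild
        value = compute()
        self._store[key] = (now + self._ttl, value)
        return value


calls = {"count": 0}


def load_price() -> float:
    """Stand-in for an expensive pricing-rule evaluation."""
    calls["count"] += 1
    return 19.99


cache = TTLCache(ttl_seconds=30)
first = cache.get_or_compute("price:SKU-42", load_price)
second = cache.get_or_compute("price:SKU-42", load_price)
print(first, second, calls["count"])  # 19.99 19.99 1
```

The second lookup never reaches `load_price`. The trade-off is staleness for up to the TTL, which is why short TTLs suit prices and stock while longer ones suit catalog copy.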

The harder conversation is build-vs-buy on the integration tier. If a legacy ERP is the gating factor on dynamic request latency, the question isn't whether to optimize it. It's whether to wrap it in an async event-driven layer and treat the ERP as an eventually-consistent system of record. Reference patterns in the Google Cloud Architecture Framework cover this terrain well, and the hiring market for engineers who can execute that pattern is tight but not impossible.
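
To make the eventually-consistent idea concrete, here is a minimal sketch of a local read model fed by ERP events. Storefront reads are served from the local copy, and stale or duplicate events are discarded by version number. `StockEvent`, `InventoryReadModel`, and the per-SKU version field are hypothetical names for illustration and are not taken from any specific ERP or from the Google Cloud material.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class StockEvent:
    sku: str
    qty: int
    version: int  # assumed to increase per SKU on the ERP side


class InventoryReadModel:
    """Local, eventually consistent copy of ERP stock levels."""

    def __init__(self) -> None:
        self._stock: Dict[str, Tuple[int, int]] = {}  # sku -> (version, qty)

    def apply(self, event: StockEvent) -> bool:
        """Apply an event unless a newer one was already seen."""
        current = self._stock.get(event.sku)
        if current is not None and current[0] >= event.version:
            return False  # stale or duplicate delivery: ignore it
        self._stock[event.sku] = (event.version, event.qty)
        return True

    def qty(self, sku: str) -> Optional[int]:
        """Serve reads locally; the ERP is never called on the request path."""
        entry = self._stock.get(sku)
        return None if entry is None else entry[1]


model = InventoryReadModel()
# Events may arrive out of order from a queue; version 2 lands first here.
model.apply(StockEvent("SKU-42", qty=7, version=2))
model.apply(StockEvent("SKU-42", qty=9, version=1))  # older, so ignored
print(model.qty("SKU-42"))  # 7
```

The design choice is to accept that the storefront may briefly show a stock level the ERP has already changed, in exchange for taking ERP latency off every product view. Checkout can still confirm against the system of record at the moment of purchase.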

Bradley's framing is worth keeping on the wall: "Speed is a very real revenue lever. And in eCommerce, milliseconds are often the difference between growth and stagnation." Treat it as a budget line, not a sprint ticket.

Key Takeaways

- A one-second mobile delay can cut conversion by up to 20%, and 53% of mobile users abandon sites slower than three seconds. With mobile heading from 57% of eCommerce sales today to 63% by 2028, the penalty is applied to the primary revenue channel.

- Performance failure is architectural, not cosmetic. Hosting choices, caching gaps, ERP integrations, and database query patterns compound milliseconds across every session.

- Audit the pages that generate revenue: product detail, cart, and checkout. Real-user monitoring on those flows beats synthetic homepage tests every time.

- Measurable signals worth instrumenting now: Time to First Byte, API call volume per page, third-party script execution time, Largest Contentful Paint, Total Blocking Time, database query efficiency, and peak-load hosting performance.

- Teams evaluating commerce replatforming should now be asking themselves whether their integration tier is the gating factor on mobile conversion economics, and whether the next hosting contract is a marketing decision or a platform decision.

Frequently Asked Questions

Q: How much does a one-second mobile delay actually cost in conversions?

Performance data from Elementor indicates a one-second delay in mobile page load time can reduce conversion rate by up to 20%. Combined with the finding that 53% of mobile users abandon sites slower than three seconds, the compounding effect on paid acquisition efficiency is significant.

Q: Why isn't auditing the homepage enough for eCommerce performance?

Revenue rarely happens on a homepage. Shoppers convert or abandon on product detail pages, carts, and checkout flows, so those are the URLs that need real-user monitoring. Synthetic tests against a homepage tell you almost nothing about where money is actually leaking.

Q: What backend metrics should engineering teams instrument first?

Bighorn Web Solutions recommends auditing Time to First Byte, API call volume per page, third-party script execution time, Largest Contentful Paint, Total Blocking Time, database query efficiency, and hosting performance under peak load. These signals expose architectural bottlenecks that surface-level speed scores miss.
