Moreh Hits A100 Numbers on Tenstorrent, Skips the HBM Tax

Picture a freight yard where every container has been moving on the same gauge of rail for a decade. Then someone shows up with a second track, narrower, cheaper, and points out that half your cargo doesn't actually need the express line. That's roughly what Moreh just did on a stage in San Francisco, except the cargo is LLM tokens and the express line is HBM-stuffed GPUs.

What Happened

On May 1, at Tenstorrent's TT-Deploy event in San Francisco, Santa Clara-based Moreh took the stage and ran a live LLM inference demo on the Tenstorrent Galaxy Wormhole system. As Bastille Post (巴士的報) reported the following day, the company claimed inference performance on Galaxy Wormhole matching or surpassing NVIDIA DGX A100-class systems across a set of Mixture-of-Experts (MoE) models: GPT-OSS, Qwen, GLM, and DeepSeek.

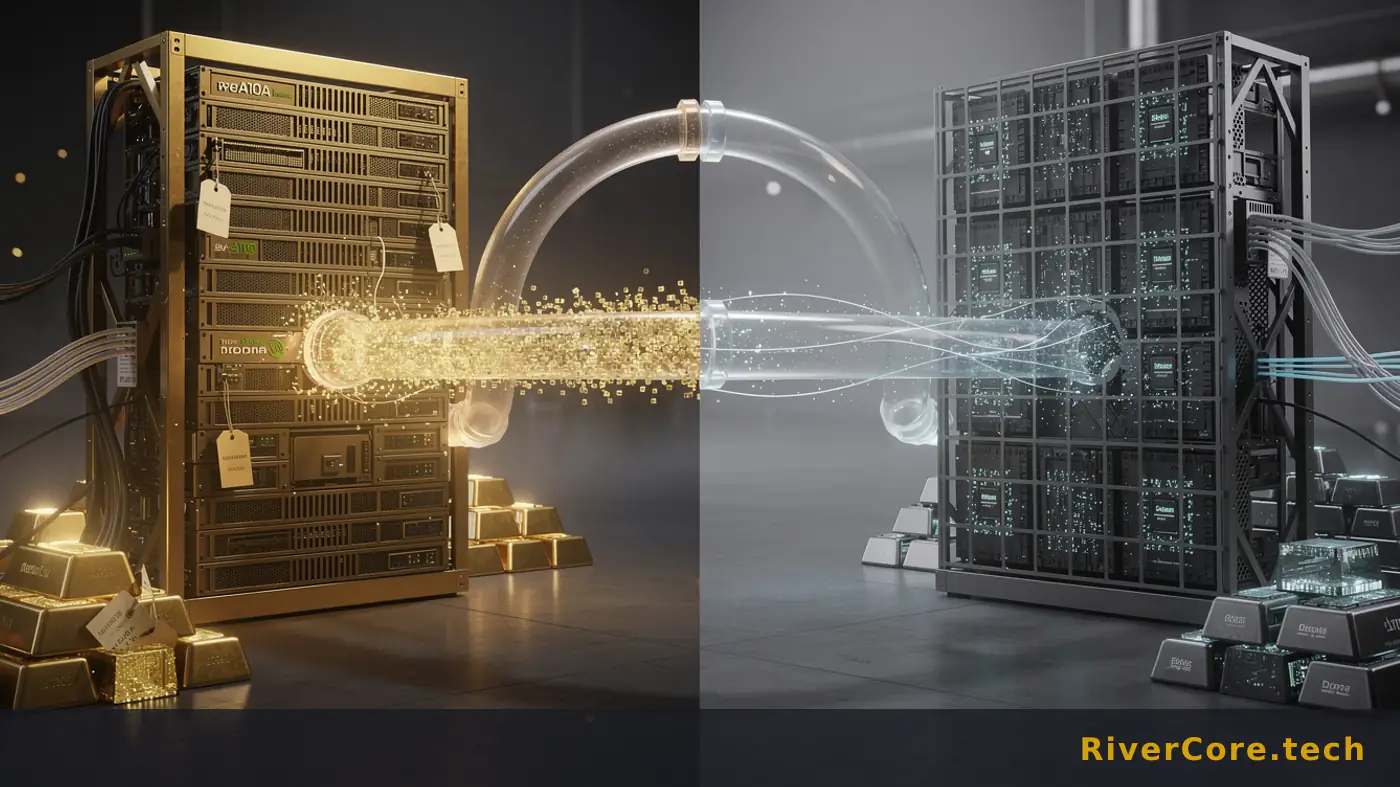

The validation was driven by Moreh's own MoAI Inference Framework. The headline trick is architectural, not silicon-level: a disaggregated serving setup that pairs GPUs with Tenstorrent Wormhole chips and uses the Tenstorrent processors as dedicated prefill accelerators. Decode stays on GPUs. Prefill moves off them. The result, per Moreh, is meaningfully reduced reliance on high-cost HBM and a lower overall infrastructure bill.

CEO Gangwon Jo framed it as a milestone. "Achieving production-grade LLM inference performance and stability on Tenstorrent-based systems marks a significant milestone," he said, adding that the team will "continue to enhance performance through deeper optimization across heterogeneous architectures and closer integration with Tenstorrent NPUs."

Context worth knowing: Moreh is a strategic partner of Tenstorrent and a major external contributor to Metalium, Tenstorrent's low-level programming stack. The company already runs AMD GPU-based production environments in real data centers, has a foundation-model subsidiary called Motif Technologies, and lists collaborations with AMD, Tenstorrent, and SGLang. None of which is incidental. You don't get production-grade numbers on novel silicon without years of someone else's debugging baked into your runtime.

Technical Anatomy

To see why this matters, you have to look at what an LLM actually does on a request. There are two phases, and they have personalities as different as a sprinter's and a marathoner's. Prefill chews through the entire input prompt in parallel: compute-bound, embarrassingly parallel, hungry for FLOPs. Decode generates tokens one at a time: autoregressive, latency-sensitive, and bottlenecked by memory bandwidth, which is why HBM exists in the first place.
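To put rough numbers on the sprinter/marathoner split, here is a roofline-style sketch of a single dense linear layer. It assumes bf16 weights and counts only weight traffic (ignoring activations and KV-cache reads), and the accelerator figures (312 TFLOP/s, 2.0 TB/s) are illustrative assumptions, roughly A100-like, not measurements of any system in this story. The point is the shape: arithmetic intensity scales with the number of tokens processed per weight read, which runs to thousands in prefill but only equals the batch size in decode.

```python
# Roofline-style sketch: arithmetic intensity of one dense linear layer.
# Assumptions: bf16 weights, only weight traffic counted (no activations,
# no KV-cache reads), hypothetical accelerator figures.

BYTES_PER_PARAM = 2  # bf16

def linear_intensity(tokens: int, d_in: int, d_out: int) -> float:
    """FLOPs per byte of weight traffic for a (tokens x d_in) @ (d_in x d_out) matmul."""
    flops = 2 * tokens * d_in * d_out               # multiply + add per weight per token
    weight_bytes = d_in * d_out * BYTES_PER_PARAM   # weights read once per forward pass
    return flops / weight_bytes

# Hypothetical accelerator: 312 TFLOP/s bf16, 2.0 TB/s memory bandwidth.
# Kernels below this FLOPs/byte ratio are bandwidth-bound, above it compute-bound.
RIDGE = 312e12 / 2.0e12

d_model = 8192
prefill = linear_intensity(tokens=4096, d_in=d_model, d_out=d_model)  # one 4k-token prompt
decode = linear_intensity(tokens=8, d_in=d_model, d_out=d_model)      # 8 requests, 1 new token each

for name, intensity in [("prefill", prefill), ("decode", decode)]:
    regime = "compute-bound" if intensity > RIDGE else "bandwidth-bound"
    print(f"{name}: {intensity:.0f} FLOPs/byte -> {regime}")
```

Under these assumptions prefill lands at 4,096 FLOPs/byte (compute-bound) and decode at 8 (bandwidth-bound), against a ridge point of 156.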

The industry's default answer has been to throw both phases at the same GPU and hope your batching strategy hides the mismatch. Anyone who has tuned a vLLM deployment at 2am knows how that plays out: prefill spikes blow up your tail latency, and during those compute-bound bursts the expensive HBM bandwidth sits largely idle. You're paying premium memory prices to do compute work.

Disaggregated serving splits the two. Send prefill to a node optimised for compute throughput, hand the KV cache to a decode node optimised for memory bandwidth, and let each do what it's good at. Splitwise and DistServe made the academic case. Moreh's contribution is the production wiring on heterogeneous silicon: prefill on Tenstorrent Wormhole, decode on GPUs, all orchestrated by MoAI as a single cluster.
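A toy sketch of the prefill-to-decode hand-off, for intuition only. `PrefillWorker`, `DecodeWorker`, `KVCache` and `serve` are hypothetical names, not MoAI's API, and the "model" is an arithmetic stand-in. In a real system the KV cache is a set of per-layer key/value tensors and the hand-off is a network transfer between nodes.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class KVCache:
    """Stand-in for the per-layer key/value tensors that prefill produces."""
    request_id: str
    tokens: List[int]

class PrefillWorker:
    """Hypothetical compute-optimised node: processes the whole prompt in one pass."""
    def run(self, request_id: str, prompt_tokens: List[int]) -> KVCache:
        # A real worker pushes all prompt tokens through the model in parallel
        # and emits K/V tensors per layer; here we only record the context.
        return KVCache(request_id, list(prompt_tokens))

class DecodeWorker:
    """Hypothetical bandwidth-optimised node: extends the cache one token per step."""
    def generate(self, kv: KVCache, max_new_tokens: int) -> List[int]:
        out = []
        for _ in range(max_new_tokens):
            # Toy "model": the next token is a deterministic function of the cached context.
            next_tok = (sum(kv.tokens) + len(kv.tokens)) % 50_000
            kv.tokens.append(next_tok)  # every decode step grows the KV cache
            out.append(next_tok)
        return out

def serve(request_id: str, prompt_tokens: List[int],
          prefill: PrefillWorker, decode: DecodeWorker, max_new_tokens: int = 4) -> List[int]:
    kv = prefill.run(request_id, prompt_tokens)     # phase 1: prefill fleet
    # In a real cluster this hand-off is a KV-cache transfer over the network.
    return decode.generate(kv, max_new_tokens)      # phase 2: decode fleet

print(serve("r1", [1, 2, 3], PrefillWorker(), DecodeWorker()))  # [9, 19, 39, 79]
```

Because the two workers share nothing except the `KVCache` object, each fleet can be sized and scheduled independently, which is the core argument for disaggregation.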

Wormhole's pitch fits this slot well. The chip leans on an SRAM-heavy tile architecture rather than stacks of HBM, which is exactly what you want when the job is dense matmul over a known prompt and you don't need to keep a giant KV cache resident. Use it as a prefill accelerator and the HBM bill, the line item where GPU inference falls over financially, shrinks, because you only provision HBM for the decode fleet.
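The "provision HBM only for the decode fleet" argument comes down to KV-cache arithmetic: decode nodes must keep every active sequence's keys and values resident, while prefill nodes produce them and pass them on. Here is a back-of-envelope sketch using the standard per-token formula (keys plus values, per layer, per KV head). The model shape is hypothetical, and weights, activations and memory fragmentation are ignored.

```python
def kv_bytes_per_token(num_layers: int, num_kv_heads: int, head_dim: int,
                       bytes_per_elem: int = 2) -> int:
    # Factor of 2: one key and one value vector per layer per KV head (bf16 by default).
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_elem

def decode_kv_gib(concurrent_seqs: int, context_len: int, **model_shape) -> float:
    """HBM needed just for resident KV cache on the decode side, in GiB."""
    total_bytes = concurrent_seqs * context_len * kv_bytes_per_token(**model_shape)
    return total_bytes / 2**30

# Hypothetical model shape, not any of the models named in this article.
shape = dict(num_layers=32, num_kv_heads=8, head_dim=128)
print(kv_bytes_per_token(**shape))        # 131072 bytes (128 KiB) per token
print(decode_kv_gib(64, 8192, **shape))   # 64.0 GiB for 64 sequences at 8k context
```

For this hypothetical shape, that works out to 64 GiB of KV cache for 64 concurrent 8k-token sequences, before a single weight is loaded. That is the memory the decode fleet must hold and the prefill fleet does not.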

The MoE detail matters too. GPT-OSS, Qwen, GLM, and DeepSeek all route tokens to expert subsets, which adds communication overhead but reduces per-token compute. Getting MoE right on a non-NVIDIA backend means handling all-to-all routing across a fabric that wasn't designed for NCCL. That's the guts of it, and that's where contributing to open inference stacks like SGLang pays off.
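Top-k gating is the common MoE routing pattern, and a minimal sketch shows where the all-to-all traffic comes from: each token is copied to whichever devices host its chosen experts. This is a generic illustration, not Moreh's kernel or any named model's router. Real routers differ in normalisation, capacity limits and load balancing, and the `experts_per_device` mapping assumes experts are placed contiguously across devices.

```python
import math
from collections import Counter
from typing import Dict, List, Tuple

def top_k_route(logits: List[float], k: int = 2) -> Tuple[List[int], List[float]]:
    """Softmax over experts, keep the k most probable, renormalise their weights."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]      # subtract max for numerical stability
    total = sum(exps)
    probs = [e / total for e in exps]
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in chosen)
    return chosen, [probs[i] / norm for i in chosen]

def dispatch_counts(router_logits: List[List[float]], experts_per_device: int,
                    k: int = 2) -> Dict[int, int]:
    """How many token copies each device receives in the all-to-all dispatch step."""
    received = Counter()
    for logits in router_logits:
        experts, _ = top_k_route(logits, k)
        for e in experts:
            received[e // experts_per_device] += 1   # assumes contiguous expert placement
    return dict(received)

# 4 experts on 2 devices (experts 0-1 on device 0, experts 2-3 on device 1).
tokens = [[4, 3, 0, 0], [0, 0, 5, 1], [2, 0, 3, 0], [5, 4, 0, 0]]
print(dispatch_counts(tokens, experts_per_device=2))  # {0: 5, 1: 3}
```

Even in this four-token example, the dispatch sends five token copies to one device and three to the other. At scale, that volume and imbalance is what the interconnect has to absorb on every MoE layer.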

Who Gets Burned

NVIDIA's monoculture is the obvious answer, but the real exposure is more interesting. The companies that should be sweating are the inference-as-a-service providers who priced their margins on the assumption that DGX-class H100/H200 fleets were the only path to production-grade latency. If a Korean software shop can hit A100-class numbers on cheaper silicon by being clever about request topology, the ground under premium GPU rentals starts to shift.

A100-class is not H200-class, and I'd argue Moreh is being deliberately careful about that comparison. The pitch isn't "we beat the frontier." The pitch is "we hit the workhorse tier at a lower bill of materials." For 80% of enterprise inference workloads (RAG endpoints, internal copilots, batch summarisation), A100-class throughput at lower cost is the actual market.

The next 90 days look painful for two specific groups. First, single-vendor GPU cloud startups whose entire moat is "we have allocation." If heterogeneous clusters become orderable, allocation stops being a moat. Second, infrastructure teams at mid-tier AI companies who built their stack assuming CUDA forever. Their CTOs are going to start getting board questions about hardware diversification, and "we only run on NVIDIA" is becoming a harder answer to defend.

Tenstorrent itself gets a real production reference. Until now, the pitch has been potential. A live demo at TT-Deploy from a partner running real MoE workloads is the first time the company has had something to point at beyond benchmarks. AMD comes out fine: Moreh's framework supports AMD GPUs in the same cluster, which means MI300-class hardware now has a credible inference partner that isn't looking to drop it for NVIDIA.

Playbook for AI Development

If you're running inference at any meaningful scale, this week's homework is straightforward. Audit your prefill-to-decode ratio. If you don't know what fraction of your GPU-seconds are spent on prefill versus decode, you can't reason about disaggregation, and you're almost certainly over-buying HBM. Most teams discover prefill is 30-50% of compute on long-context workloads, and that's the slice you can route off premium silicon.
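Here is one rough way to start that audit from request logs. The `RequestLog` fields are hypothetical, so map them to whatever your serving stack actually records. Time-to-first-token minus queueing delay is a common proxy for prefill time. Under continuous batching, per-request wall-clock times overlap, so treat the output as a ratio rather than absolute GPU-seconds. The sketch also splits results by prompt length, because long-context traffic is where prefill dominates.

```python
from dataclasses import dataclass
from typing import Dict, List

@dataclass
class RequestLog:
    # Hypothetical fields: map them to what your serving stack actually records.
    prompt_tokens: int
    output_tokens: int
    prefill_s: float  # e.g. time-to-first-token minus queueing delay
    decode_s: float   # first token to last token

def prefill_share(logs: List[RequestLog]) -> float:
    """Fraction of summed request time spent in prefill."""
    prefill = sum(r.prefill_s for r in logs)
    decode = sum(r.decode_s for r in logs)
    return prefill / (prefill + decode)

def share_by_prompt_length(logs: List[RequestLog], long_threshold: int = 8192) -> Dict[str, float]:
    """Prefill share split by prompt length; empty buckets are skipped."""
    buckets = {
        "short": [r for r in logs if r.prompt_tokens < long_threshold],
        "long": [r for r in logs if r.prompt_tokens >= long_threshold],
    }
    return {name: prefill_share(b) for name, b in buckets.items() if b}

logs = [RequestLog(1_000, 200, 0.5, 4.5), RequestLog(16_000, 200, 3.0, 2.0)]
print(prefill_share(logs))
print(share_by_prompt_length(logs))
```

With these two made-up requests, the blended share is 0.35, but it splits into 0.1 for the short prompt and 0.6 for the long one. A single blended number can therefore hide exactly the slice worth moving.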

Second, stop treating vendor lock-in as theoretical. The MoAI Inference Framework supports NVIDIA, AMD, and Tenstorrent in a single cluster. Whether or not you adopt Moreh, design your serving layer so that swapping a backend is a config change, not a rewrite. SGLang and similar runtimes give you a reasonable abstraction. Use it.

Third, if you're building an agentic product, look at where prefill dominates your bill. Tool-calling agents that re-prefill long context windows on every turn are the worst-case workload for monolithic GPU serving. Patterns documented in Anthropic's docs around prompt caching and tool use only get you so far. The structural fix is disaggregation, and it's now buyable.
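The token arithmetic shows why agent loops are the worst case. If every turn appends to the context and the whole history is re-prefilled, total prefill grows quadratically with the number of turns. The sketch assumes a fixed system prompt and a fixed number of appended tokens per turn (tool output plus model response). The `prefix_cache=True` branch is an idealised lower bound in which every earlier token is reused, something real caches only approximate.

```python
def prefill_tokens(system_tokens: int, tokens_per_turn: int, turns: int,
                   prefix_cache: bool = False) -> int:
    """Total tokens prefilled over one agent session.

    Turn i sees system_tokens + i * tokens_per_turn of context. Without reuse,
    the whole context is prefilled every turn; with an idealised prefix cache,
    only turn 1 pays for the full context and later turns prefill just the new tokens.
    """
    total = 0
    for i in range(1, turns + 1):
        context = system_tokens + i * tokens_per_turn
        total += tokens_per_turn if (prefix_cache and i > 1) else context
    return total

print(prefill_tokens(4000, 500, 20))                     # 185000: grows with turns squared
print(prefill_tokens(4000, 500, 20, prefix_cache=True))  # 14000: grows linearly
```

Even the idealised cache only removes the re-prefill. Every new turn still has to be prefilled somewhere, which is the residual load a dedicated prefill tier would absorb.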

Fourth, talk to procurement. Lead times on Tenstorrent Galaxy systems are not lead times on H200s. If your 2026 capacity plan is "wait for NVIDIA allocation," there's now a credible parallel path. Run the numbers on a mixed cluster before your next budget cycle.
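Here is a first-pass cost model for that budget conversation, under loudly stated assumptions. The workload's GPU-hours and prefill share come from your own audit. `accel_speed` is the prefill accelerator's throughput relative to one GPU. All rates are placeholders, not vendor pricing. The model ignores KV-cache transfer, networking and operational overhead, so treat it as a screening test, not a quote.

```python
def monolithic_cost(gpu_hours: float, gpu_rate: float) -> float:
    """Everything (prefill and decode) runs on GPUs."""
    return gpu_hours * gpu_rate

def mixed_cost(gpu_hours: float, prefill_share: float, gpu_rate: float,
               accel_rate: float, accel_speed: float = 1.0) -> float:
    """Decode stays on GPUs; prefill moves to an accelerator.

    accel_speed is the accelerator's prefill throughput relative to one GPU
    (hypothetical; measure it), so it needs prefill_hours / accel_speed hours.
    """
    decode_hours = gpu_hours * (1 - prefill_share)
    accel_hours = gpu_hours * prefill_share / accel_speed
    return decode_hours * gpu_rate + accel_hours * accel_rate

def break_even_rate(gpu_rate: float, accel_speed: float) -> float:
    """Accelerator hourly rate at which mixed and monolithic costs are equal."""
    return gpu_rate * accel_speed

# Placeholder inputs: 1,000 GPU-hours/month, 40% prefill, $2.00/GPU-hr,
# $1.00/accelerator-hr, accelerator at 80% of a GPU's prefill throughput.
print(monolithic_cost(1000, 2.0))            # baseline
print(mixed_cost(1000, 0.4, 2.0, 1.0, 0.8))  # mixed cluster
print(break_even_rate(2.0, 0.8))             # max accelerator rate that still saves money
```

With these placeholders, the mixed cluster comes out at about $1,700 against $2,000 for the monolithic one, and the break-even accelerator rate is $1.60 an hour. The algebra is worth noticing. The mixed cluster is cheaper only when the accelerator's rate is below the GPU rate multiplied by `accel_speed`, whatever the prefill share. The share only determines how large the savings are.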

Key Takeaways

- Moreh's MoAI Inference Framework hit DGX A100-class numbers on Tenstorrent Galaxy Wormhole across GPT-OSS, Qwen, GLM, and DeepSeek MoE models, validated live at TT-Deploy on May 1.

- The architecture splits prefill onto Tenstorrent and keeps decode on GPUs, cutting HBM dependency and overall infrastructure cost.

- MoAI runs NVIDIA, AMD, and Tenstorrent silicon in a single cluster, which is the first production-grade answer to vendor lock-in I've seen actually demoed rather than slide-deck'd.

- The exposure is to single-vendor GPU clouds and CUDA-only inference stacks, not to NVIDIA's frontier silicon, which still sits unchallenged at the top end.

- Audit prefill-versus-decode split this week, and design your serving layer so backend swaps are config-level, not architectural.

Back to the freight yard. The express line still runs, the H200s aren't going anywhere, and frontier training is still a CUDA story for the foreseeable future. But the second track is open for business, and the cargo that doesn't need the express ride just got a cheaper option. That's the part the hyperscalers will notice first, and the part the rest of us should be planning around.

Frequently Asked Questions

Q: What is disaggregated LLM serving and why does it matter?

Disaggregated serving splits the two phases of LLM inference, prefill (processing the input prompt) and decode (generating tokens), onto separate hardware optimised for each. Prefill is compute-bound and decode is memory-bandwidth-bound, so running both on the same GPU wastes resources. Splitting them lets you size each fleet independently and use cheaper silicon for prefill.

Q: Does this mean Tenstorrent matches NVIDIA's flagship H100 or H200 chips?

No. Moreh's claim is matching or surpassing DGX A100-class performance, which is NVIDIA's previous-generation workhorse tier, not the current H100/H200 frontier. The pitch is cost-efficient parity at the enterprise inference tier, not displacing top-end training silicon.

Q: Can teams use the MoAI Inference Framework today across mixed hardware?

Per Moreh, MoAI supports unified operation of NVIDIA, AMD, and Tenstorrent processors within a single cluster, and the company is a strategic partner of Tenstorrent and a major contributor to Metalium. Production readiness on Tenstorrent was just validated at TT-Deploy on May 1, 2026, so adoption timelines for outside teams will depend on direct engagement with Moreh.
